The AI Buildout Has a Second Chapter – the Shift from Copper to Light

We know the AI trade – we’ve written about it on this blog. We bought the chipmakers. We bought the data center builders. We bought the power companies. That playbook worked — and it still has legs. But there’s a second chapter to this story that is now unfolding, and it is early in the shift. The theme is photonics, and if you’re not paying attention to it yet, you should be.

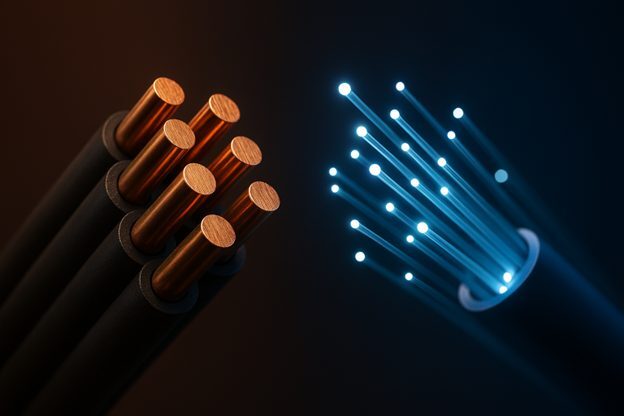

Here’s the short version. We spent Phase 1 of the AI buildout buying computing power — specifically, the GPUs (graphics processing units) that make AI model training possible. Phase 2 is about moving the data those GPUs generate. And the copper wire that currently does that job is running out of bandwidth.

This isn’t just a Wall Street thesis. Researchers in physics and engineering have been making this argument in peer-reviewed journals for years. A landmark review in Nature Photonics laid out the case for photonics (moving data via light) as the enabling technology for AI and neuromorphic computing (computers working like the human brain). A 2024 paper in Light: Science & Applications specifically addressed resource-efficient photonic networks for next-generation AI. A sponsored white paper published through the Wall Street Journal called photonics the foundation powering the future of AI infrastructure. The scientific and industry consensus has been building quietly for a while. The market is just now catching up.

Copper Hit a Wall. Literally.

This isn’t a metaphor. It’s physics. As data rates in AI clusters pushed to 224 gigabits per second per lane in late 2024, the effective reach of passive copper cable dropped to less than one meter. In a data center the size of multiple football fields, that’s basically useless for rack-to-rack communication.

To push a signal further over copper at those speeds, you have to pour in massive amounts of power for amplification. By early 2025, interconnects were consuming nearly 30% of total cluster power. That number only gets worse as clusters scale. Jensen Huang, NVIDIA CEO, said it plainly at NVIDIA’s GTC 2025 conference: “Energy is our most important commodity.” He wasn’t talking about oil. He was talking about watts lost to copper.

The industry’s answer is to replace copper with light — fiber optic connections that move data at the speed of light with a fraction of the power. Researchers in the field put it bluntly: the copper is saturated. Fiber is now moving deeper into the data center, rack by rack, because the data demands leave no other option.

The Scale of This Problem Is Hard to Overstate

I’ve written before about the energy demands of AI infrastructure, but the numbers keep getting bigger. Global data centers consumed roughly 415 terawatt-hours (TWh) of electricity in 2024, about 1.5% of global electricity use. U.S. data centers alone accounted for more than 4% of all U.S. electricity, comparable to the entire annual electricity demand of Pakistan. The International Energy Agency (IEA) projects that the global figure grows to 945 TWh per year by 2030. If the AI boom accelerates faster than expected, the curve steepens.

You’re already feeling this in your utility bill. In the PJM electricity market stretching from Illinois to North Carolina, data centers accounted for an estimated $9.3 billion increase in the capacity market for 2025-26. The average residential bill in western Maryland is expected to rise $18 a month because of it. The U.S. Energy Information Administration (EIA) forecasts commercial electricity consumption growing 5% in 2026, with data centers as the primary driver.

This is the dirty secret of the AI trade: the computing power arms race isn’t just a silicon story. It’s an energy story. Photonics attacks this problem directly. Co-packaged optics (CPO), the next-generation approach that places optical conversion in the same package as the switch chip, can reduce rack-level power consumption by up to 40% compared to traditional pluggable transceivers. That’s the difference between a data center that can scale and one that hits a grid wall. The energy picture here is something I’ve also explored in the context of the Stargate buildout. A Photonics21 industry white paper summed it up bluntly: only photonics can satisfy AI’s appetite for computing power. I’d say they’re right.

The Photonics Supply Chain: Start to Finish

Photonics is not one product. It’s a stack of interlocking technologies, each with its own bottlenecks, its own timeline, and its own set of companies fighting for position. Understanding that stack is how you figure out where the pricing power lives — and which companies are positioned to capture it.

Here’s how the supply chain works from raw material to data center, with the public companies that play at each layer.

| Step | What Happens | Key Companies |
| --- | --- | --- |
| Step 0: Mining & Refining | Indium recovered as byproduct of zinc smelting. ~75% lost globally. No dedicated indium mines. | AXTI (AXT Inc.), IQE |
| Step 1: The Substrate | Indium + phosphorus = Indium Phosphide (InP) wafers. Brittle, expensive, still mostly on 6-inch while silicon fabs run 12-inch. | AXTI (AXT Inc.), IQE |
| Step 2: Epitaxial Growth | Nanometer-thin layers grown on wafer. Composition determines laser wavelength, power, efficiency. Wrong = whole wafer scrapped. | COHR (Coherent), LITE (Lumentum), TSEM (Tower Semi) |
| Step 3: Wafer Fabrication | Waveguides, laser cavities, switching structures carved into wafer. Requires dedicated photonics fab. Takes years to qualify. | COHR, LITE, GFS (GlobalFoundries), TSEM, POET Technologies |
| Step 4: Dicing & Yield | Wafer cut into chips. Each tested to spec. Yield hides inside gross margin. Years of refinement to improve it. | AEHR (Aehr Test Systems), FORM (FormFactor), VIAV (Viavi Solutions), KEYS (Keysight) |
| Step 5: Component Assembly | Laser chip aligned to fiber at sub-micron tolerance. Hermetically sealed. Hard to automate. Hermetic packages are their own bottleneck. | COHR, LITE, FN (Fabrinet), MTSI (MACOM), POET, ALMU (Almus) |
| Step 6: Transceiver Module | Sub-assembly + DSP chip + housing = pluggable transceiver. Each unit individually tested. Testing pace limits output scale. | AAOI (Applied Optoelectronics), LITE, COHR, CIEN (Ciena), NOK (Nokia) |
| Step 7: Into the Data Center | Transceiver plugs into network switch. Ultra-pure glass fiber carries signals between every switch, server, and building. | GLW (Corning), CIEN, ANET, CRDO (Credo Semi), MRVL (Marvell), AVGO (Broadcom) |
| Disruptors (New Architectures) | Next-gen photonic integrated circuits, electro-optic polymers, external light source designs. Higher upside, longer timeline. | POET Technologies, LWLG (Lightwave Logic), ALMU (Almus) |
| Test & Validation | Wafer-level burn-in and signal testing for silicon photonics reliability. Mission-critical before deployment. | AEHR, FORM, KEYS, VIAV |

Step 0 & 1: Where It All Starts — Indium and the Substrate

Everything starts with indium, an obscure metal with no dedicated mines. It’s a byproduct of zinc refining — meaning it only gets recovered when zinc smelters have the equipment and economic incentive to capture it from their waste stream. About 75% of potential global indium supply is simply lost in processing. That upstream constraint ripples through everything downstream.

Refined indium gets combined with phosphorus to create Indium Phosphide (InP), the material used to make high-performance data center lasers. Silicon — the material that runs everything else in computing — cannot generate light directly from electricity. InP can. This is why InP is strategically irreplaceable, and why the companies that supply InP wafers sit at the most critical chokepoint in the chain. AXT Inc. (AXTI) and IQE are the primary publicly traded substrate suppliers. The industry is still mostly on 6-inch InP wafers while standard silicon fabs run 12-inch — that size gap is a big reason photonics capacity is so hard to scale quickly. We do not own these companies, but they are on my watch list.
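To put the wafer-size gap in rough numbers: usable area scales with the square of the diameter, so a 12-inch wafer offers about four times the surface of a 6-inch one. The sketch below is a back-of-the-envelope illustration only. The function name is hypothetical, and the calculation ignores edge exclusion, die size, and yield.

```python
import math

def wafer_area_sq_in(diameter_in: float) -> float:
    """Area of a circular wafer in square inches (ignores edge exclusion and flats)."""
    return math.pi * (diameter_in / 2) ** 2

inp_area = wafer_area_sq_in(6)       # InP wafer size cited above
silicon_area = wafer_area_sq_in(12)  # standard silicon wafer size cited above
area_ratio = silicon_area / inp_area

print(f"6-inch wafer:  {inp_area:.1f} sq in")
print(f"12-inch wafer: {silicon_area:.1f} sq in")
print(f"Area ratio:    {area_ratio:.1f}x")
```

All else equal, each InP wafer start yields roughly a quarter of the chip area a 12-inch silicon wafer would, which is part of why adding photonics capacity is slow.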

Steps 2 & 3: Epitaxial Growth and Wafer Fabrication — The Real Bottleneck

A bare InP wafer does nothing useful on its own. Engineers have to grow nanometer-thin additional layers on top of it — a process called epitaxy — where the precise chemical composition of each layer determines the laser’s wavelength, power, and efficiency. Get it slightly wrong, and the entire wafer gets scrapped. This requires specialized equipment found in very few places in the world. It’s rarely discussed publicly, but it’s one of the most critical bottlenecks in the entire supply chain.

After epitaxy, the wafer goes through dedicated photonics fabrication — waveguides, laser cavities, switching structures. Unlike standard semiconductor manufacturing, this cannot be done in a regular chip fab. It requires a dedicated photonics facility that takes years to build, qualify, and ramp. There are very few of them globally. Coherent (COHR) and Lumentum (LITE) are the established leaders here. Tower Semiconductor (TSEM) and GlobalFoundries (GFS) provide foundry backbone. POET Technologies (POET) is developing an integrated optical platform that aims to collapse several of these steps into a single manufacturing process — higher risk, but potentially transformative if it works at scale. We do not own these companies, but they are on my add list.

Step 4: Dicing and Yield — Where Margins Are Made or Destroyed

The finished wafer gets diced into individual chips, and each one gets tested to see if it actually works to spec. The percentage that pass is called yield, and it’s one of the most important numbers in this business — even though you won’t see it reported directly. It hides inside gross margin. Low yield means high cost per working chip. Improving it is hard-won through years of process refinement.
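Why yield hides inside gross margin can be shown with simple arithmetic: the cost of a processed wafer is spread over only the chips that pass test. The numbers below are invented purely for illustration (they are not figures from any company), and `cost_per_good_chip` is a hypothetical helper.

```python
def cost_per_good_chip(wafer_cost: float, chips_per_wafer: int, yield_rate: float) -> float:
    """Spread the full wafer cost over only the chips that pass testing."""
    if not 0 < yield_rate <= 1:
        raise ValueError("yield_rate must be in (0, 1]")
    good_chips = chips_per_wafer * yield_rate
    return wafer_cost / good_chips

# Hypothetical inputs, for illustration only.
WAFER_COST = 10_000.0    # assumed cost of one processed wafer, in dollars
CHIPS_PER_WAFER = 1_000  # assumed number of chips diced from one wafer

low = cost_per_good_chip(WAFER_COST, CHIPS_PER_WAFER, 0.40)
high = cost_per_good_chip(WAFER_COST, CHIPS_PER_WAFER, 0.80)
print(f"40% yield: ${low:.2f} per working chip")
print(f"80% yield: ${high:.2f} per working chip")
```

Under these assumptions, doubling yield halves the cost of each working chip with no change in selling price, which is why yield gains show up as gross margin rather than as a separately reported number.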

This is where the test and validation companies step in. Aehr Test Systems (AEHR), FormFactor (FORM), Viavi Solutions (VIAV), and Keysight (KEYS) provide the wafer-level burn-in and signal measurement equipment that ensures components meet reliability standards before they go into mission-critical AI data centers. The surge in complex silicon photonics has made rigorous testing even more essential — and more valuable. We do not own these companies, but they are on my add list.

Step 5: Component Assembly — The Hardest Manufacturing Problem in the Stack

A working laser chip still can’t be used on its own. It has to be physically aligned to an optical fiber at sub-micron tolerances, combined with light detectors and signal modulators, and hermetically sealed inside a protective ceramic and metal enclosure. Data centers run hot, and moisture or dust will destroy a laser chip quickly. Packaging can consume more than 80% of the total manufacturing cost of a photonic integrated circuit (PIC).

Automating this assembly process reliably at high volume remains one of the hardest open problems in the industry. Fabrinet (FN) and MACOM Technology Solutions (MTSI) are the primary manufacturing and scale partners at this layer. Almus (ALMU) is a smaller, longer-timeline bet on next-generation packaging approaches, one I’d characterize as a 2029-2030 story rather than a near-term catalyst. We previously owned FN but sold it during a market downturn; the others are on the add list.

Step 6: The Transceiver Module — Where Optics Becomes a Finished Product

The assembled optical sub-assembly goes into a housing along with a DSP (digital signal processor) chip, a circuit board, and a casing to create a pluggable transceiver — the finished module that slots into a switch or server in a data center. Each unit gets individually tested before it ships. That testing process is slow and expensive, and it’s a hidden constraint on how fast output can actually scale.

This is the most competitive layer of the stack but also the highest current volume. Applied Optoelectronics (AAOI), Lumentum (LITE), and Coherent (COHR) are the core transceiver and optical module suppliers. Ciena (CIEN) plays here as well, focused on high-capacity routing and network equipment. Nokia (NOK) is a legacy business with growing optical revenue: not a pure play, but meaningful exposure. We added CIEN after I formulated this thesis; AAOI is on the watch list.

Step 7: Into the Data Center — Systems, Fiber, and the Network Layer

The transceiver plugs into a port on a network switch, and the data moves over fiber — ultra-pure glass strands, thinner than a human hair, carrying light signals between every switch, server, and building. None of this works without the fiber infrastructure holding it all together.

Corning (GLW) is the dominant fiber glass supplier — what I’d call a necessary materials company. You cannot build the physical infrastructure without it. At the systems and silicon layer, Broadcom (AVGO), Marvell Technology (MRVL), and Credo Semiconductor (CRDO) are the adjacent silicon names that scale as optics adoption grows — they’re not pure photonics plays, but their revenue moves with the optical buildout. We own AVGO and CRDO, with GLW on the add list.

The Architecture Shift: Co-Packaged Optics

The pluggable transceiver model has dominated for years and still works. About 100 million pluggable modules are expected to ship in 2026. But the physics of AI-scale networking is pushing the industry toward CPO. Instead of a separate pluggable module converting electrical signals to light, CPO moves that conversion into the same package as the switch ASIC (application-specific integrated circuit). The signal converts to light almost immediately, before it can degrade. Power savings are significant, up to a 40% reduction in rack-level draw, and bandwidth density goes up sharply.

At GTC 2025, Jensen Huang unveiled NVIDIA’s first CPO-enabled networking switches, Quantum-X and Spectrum-X, built on Taiwan Semiconductor’s (TSM’s) COUPE (Compact Universal Photonic Engine) platform. Broadcom has followed with its Tomahawk 6 switch. The roadmap targets 12.8 terabits per second within processor packages before the end of the decade. CPO is not vaporware anymore. It’s in production, with volume deployment expected by 2027.

There is a real trade-off worth noting: if a pluggable optical module fails, you replace it in minutes. If a CPO optics element fails, you replace the entire switch, which takes hours. Reliability concerns have kept companies like Cisco cautious. This is a theme with real legs, but it’s a slog to get there. Patience is part of the trade.

How I Think About Investing in This Theme

What follows is how I think about constructing exposure — not a specific buy recommendation on any individual stock. The photonics supply chain is not one story. Different parts of it have different timelines, different risk profiles, and different catalysts. The market is already validating this. On March 9, 2026, the sector surged across the board — AXTI up nearly 20%, AAOI up nearly 16%, LITE up nearly 15% — reflecting structural re-rating as hyperscalers aggressively upgrade to 800G and 1.6T networking to support next-generation GPU clusters.

The way I bucket this is roughly as follows. Upstream substrate suppliers (AXTI, IQE) sit at the most critical chokepoint and have genuine pricing power when demand spikes — you cannot build the lasers without them. Established photonics leaders (COHR, LITE) have pivoted from transceiver assembly toward becoming what you might call laser foundries — critical component manufacturers rather than finished goods producers, which is a better structural position. The foundry backbone (TSEM, GFS) and manufacturing scale partners (FN, MTSI) provide the infrastructure layer that everything else runs through. Test and validation names (AEHR, FORM, VIAV, KEYS) tend to be overlooked but benefit every time complexity increases. And then there are the disruptors — POET, LWLG, ALMU — which are higher variance, longer timeline, but potentially transformative if their architectures win.

One market research estimate puts the global optical interconnect market in AI data centers at $9.94 billion in 2025, growing to $31 billion by 2033 — a compound annual growth rate (CAGR) of over 15%. Another puts sales of lasers and PICs used in optical transceivers growing from $2.4 billion in 2023 to $5.9 billion in 2029. Both estimates predate the CPO acceleration. I think the upside scenario is being consistently underpriced.
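As a quick sanity check on those growth figures, the implied compound annual growth rate can be computed from the endpoints with the standard formula CAGR = (end / start)^(1 / years) - 1. The sketch below uses only the numbers quoted above; the function name is hypothetical.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1

# Optical interconnect market: $9.94B (2025) to $31B (2033)
interconnect_cagr = cagr(9.94, 31.0, 2033 - 2025)

# Lasers and PICs for optical transceivers: $2.4B (2023) to $5.9B (2029)
laser_pic_cagr = cagr(2.4, 5.9, 2029 - 2023)

print(f"Optical interconnect CAGR: {interconnect_cagr:.1%}")
print(f"Laser and PIC CAGR:        {laser_pic_cagr:.1%}")
```

Both estimates work out to roughly 15% to 16% a year, consistent with the "over 15%" figure quoted for the interconnect market.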

The risk I think about most is foundry concentration. TSM is currently the only foundry capable of the advanced 3D chip-stacking required for CPO. That’s a single point of failure for the global AI supply chain — with obvious geopolitical dimensions given Taiwan’s location. I’ve covered those tail risks in previous posts, and they remain relevant context for how you size any position that runs through TSM.

Beyond Connectivity: Optical Computing Power Is Coming

Right now, photonics is about moving data. But there’s a longer arc here that most investors aren’t pricing at all yet: using light to do the computing itself. And this is where the academic research gets genuinely exciting.

In December 2024, researchers at MIT demonstrated a fully integrated photonic processor capable of performing all the key computations of a deep neural network entirely with light, on a single chip. No off-chip electronics required. The implications for speed and energy efficiency are significant — photonics can perform these operations at the speed of light, and without generating the heat that makes electrical computing so expensive to cool.

In April 2025, Lightmatter, a U.S. startup that builds photonic computing hardware and interconnects for AI data centers, went further, publishing results in Nature describing what they call universal photonic AI acceleration: a photonic processor capable of performing the matrix multiplication operations at the heart of AI inference using light. Their blog post on the research frames the stakes clearly: Moore’s Law, Dennard scaling, and memory scaling have all effectively stalled. The only way to keep delivering AI performance gains without exponential cost increases is a fundamentally different approach to computing. Lightmatter is betting that approach is photonic.

These aren’t isolated data points. A review paper in Science Bulletin covered analog optical computing for AI. A PubMed-indexed study addressed integrated neuromorphic photonic computing. The NEUROPULS project at the European level is developing neuromorphic architectures built on augmented silicon photonics platforms. Applied Physics Reviews published a piece on how AI and photonics are now a two-way street — AI is being used to design better photonic components at the same time photonics is being developed to accelerate AI. The research community has been building toward this for years. The commercial layer is just getting started.

Conclusion

I want to be clear-eyed about the timeline here. Optical computing power is not a 2026 story. It’s not even clearly a 2028 story. Lightmatter’s own team acknowledges their near-term commercial focus is on interconnects — specifically, solving the problem of connecting millions of chips — while the photonic computing power work is the longer horizon. But that’s exactly the kind of layered thesis I think about when I’m trying to understand where a theme goes over a decade, not just a quarter.

The AI infrastructure buildout has layers. Most investors have priced the computing power layer. The photonics interconnect layer is getting priced now. The optical computing power layer is still early. Understanding where you are in that stack — and sizing exposure accordingly — is how I think about managing a position in this theme.

If you’re not currently a BCNA client and want to discuss how we’re thinking about positioning in themes like this one, I’d encourage you to reach out to Joel Wallace at (217) 351-2870 or [email protected] to have a conversation.

–Mark

Disclaimer: This post is for informational purposes only and should not be considered investment advice. The views expressed are my own analysis and opinions. Every investor’s situation is different, and you should conduct your own due diligence before investing in anything. You should consult with a qualified financial professional, like ourselves, before making any investment decisions. Past performance does not guarantee future results.

Credit beyond specific links above goes to Crux Capital Group.